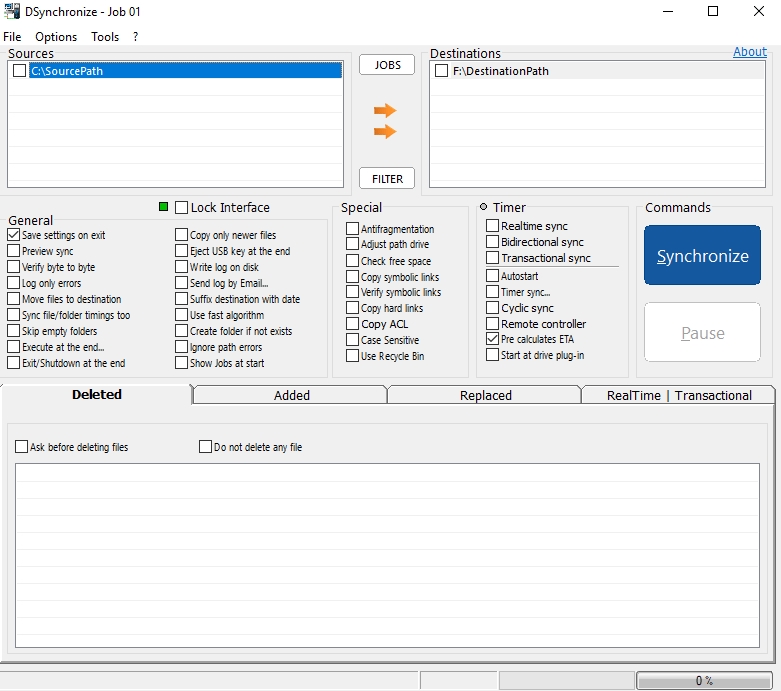

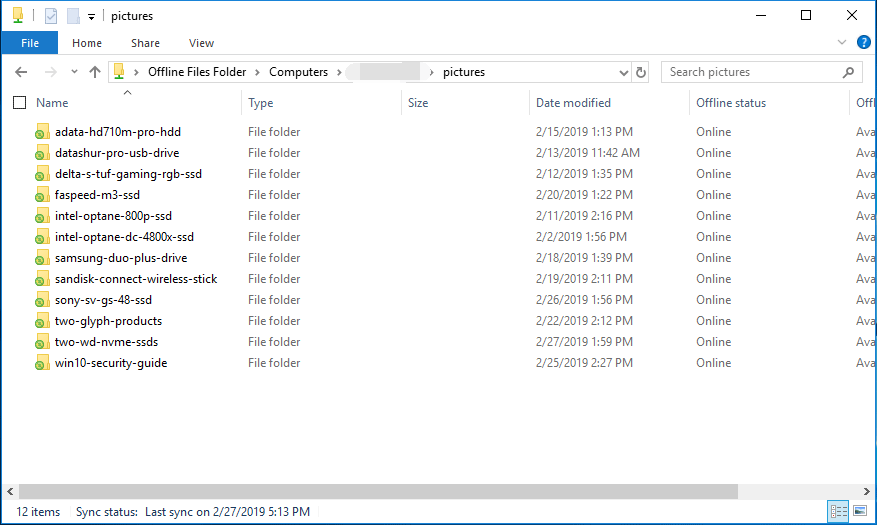

First published on TECHNET on Aug 21, 2013

By now, you know that DFS Replication has some major new features in Windows Server 2012 R2. Today I talk about one of the most radical: DFSR database cloning. Prepare for a long post, this has a walkthrough…

DFSR, or any proper file replication technology, spends a great deal of time validating that servers have the same knowledge. This is critical to safe and reliable replication: if a server doesn’t know everything about a file, it can’t tell its partner about that file. In DFSR, we often refer to this “initial build” and “initial sync” processing as “initial replication”. DFSR needs to grovel files and folders, record their information in a database on that volume, exchange that information between nodes, stage files and create hashes, then transmit that data over the network. Even if you preseed the files on each server before configuring replication, the metadata transmissions are still necessary. Each server performs this initial build process locally, and then the non-authoritative server checks his work against an authoritative copy and reconciles the differences. This process is necessarily very expensive. Heaps of local IO, oodles of network conversation, tons of serialized exchanges based on directory structures.

As you add bigger and more complex datasets, initial replication gets slower. A replicated folder that contains tens of millions of preseeded files can take weeks to synchronize the databases, even with preseeding stopping the need to send the actual files.

Furthermore, there are times when you need to recreate replication of previously synchronized data, such as when:

Any one of these requires re-running initial replication on at least one node. This has been the way of DFSR since Microsoft introduced it in Windows Server 2003 R2.

DFSR database cloning is an optional alternative to so-called classic initial replication. By providing each downstream server with an exported copy of the upstream server’s database and preseeded files, DFSR reduces or eliminates the need for over-the-wire metadata exchange. DFSR database cloning also provides multiple file validation levels to ensure reconciliation of files added, modified, or deleted after the database export but before the database import. After file validation, initial sync is now instantaneous if there are no differences. If there are differences, DFSR only has to synchronize the delta of changes as part of a shortened initial sync process.

We are talking about fundamental, state-of-the-art performance improvements here, folks. To steal from my previous post, let’s compare a test run with ~10 terabytes of data in a single volume comprising 14,000,000 preseeded files:

I think we can actually do better than this; we found out recently that we’re having some CPU underperformance in our test hardware.

Grizzly bear that shoots lasers from its eyeballs

I may be able to re-post even better numbers someday.

For instance, here I created exactly one million files and cloned that volume, using VMs running on a 3-year-old test server.

Let’s examine the mainline case of creating a new replication topology using DB cloning:

1. You create a replication group and a replicated folder, then add a server as a member of that topology (but no partners, yet). This will be the “upstream” (source) server.
3. You export the cloned database from the upstream server.
4. You preseed the files to the downstream (destination) server and copy in the exported clone DB files.
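To picture what reconciling files added, modified, or deleted between the database export and the database import might involve, here is a toy model in Python. It is only a sketch under loud assumptions, not how DFSR actually works: `snapshot`, `reconcile`, and `demo` are hypothetical names, a plain dictionary stands in for the exported database, and SHA-256 stands in for whatever hashing DFSR really uses.

```python
import hashlib
import tempfile
from pathlib import Path


def hash_file(path: Path) -> str:
    """SHA-256 of the file contents (an assumed stand-in for DFSR's own hashes)."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def snapshot(root: Path) -> dict[str, str]:
    """Map each file's relative path to its hash: a toy 'exported database'."""
    return {
        p.relative_to(root).as_posix(): hash_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def reconcile(cloned_records: dict[str, str], root: Path) -> dict[str, list[str]]:
    """Compare exported records with the files on disk and return only the delta."""
    on_disk = snapshot(root)
    return {
        "added": sorted(on_disk.keys() - cloned_records.keys()),
        "deleted": sorted(cloned_records.keys() - on_disk.keys()),
        "modified": sorted(
            name
            for name in on_disk.keys() & cloned_records.keys()
            if on_disk[name] != cloned_records[name]
        ),
    }


def demo() -> dict[str, list[str]]:
    """Export a snapshot, change some files, then validate at 'import' time."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "a.txt").write_text("alpha")
        (root / "b.txt").write_text("bravo")
        (root / "c.txt").write_text("charlie")
        exported = snapshot(root)              # the "database export"
        (root / "b.txt").write_text("BRAVO")   # modified after export
        (root / "c.txt").unlink()              # deleted after export
        (root / "d.txt").write_text("delta")   # added after export
        return reconcile(exported, root)       # validation at "import"


if __name__ == "__main__":
    print(demo())
```

If nothing changed between export and import, all three lists come back empty, which corresponds to the "instantaneous" case; otherwise only the listed files would need attention, which is the shortened initial sync idea.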
