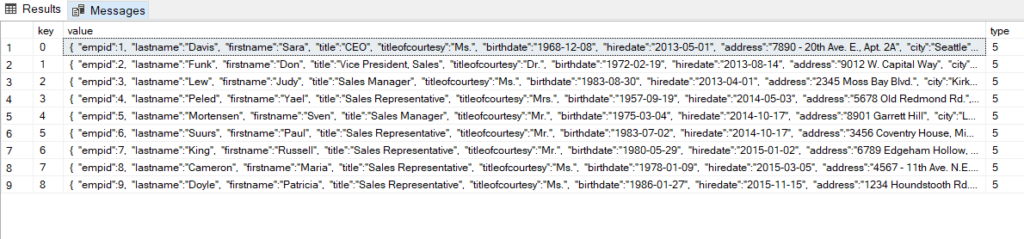

JSON documents can be stored as-is in NVARCHAR columns, either in LOB storage format or in relational storage format. Raw JSON documents have to be parsed, and they may contain non-English text. With the NVARCHAR(MAX) data type, we can store JSON documents of up to 2 GB in size. If the JSON documents are not huge, NVARCHAR(4000) is the better choice for performance reasons; otherwise, we can go for NVARCHAR(MAX). The main reason for keeping the JSON document in NVARCHAR format is cross-feature compatibility: NVARCHAR works with the other SQL Server features, so JSON stored this way works with them as well.

`SELECT a.first_name, a.last_name, f.title ... JOIN film_actor fa ON a.actor_id = fa.actor_id`

As could be seen in the above teaser, the SQL Server syntax is far less verbose and more concise, and it seems to produce a reasonable default behaviour, whereas the Db2, Oracle, PostgreSQL (and SQL Standard) SQL/XML APIs are more verbose, but also more powerful. For example, it is possible to map column a to attribute x and column b to a nested XML element y very easily.

The advantages of both approaches are clear. SQL Server's approach is much more usable in the general case. But what is the general case? Let's summarise a few key parallels between SQL result sets and XML/JSON data structures:

- Tables are XML elements, or JSON arrays.
- Column values are XML elements or attributes, or JSON attributes.
- GROUP BY and ORDER BY can be seen as a way to nest data.

Tables (i.e. sets of data) are not a foreign concept to either XML or JSON documents. The most natural way to represent a set of data in XML is a set of elements using the same element name, optionally wrapped by a wrapper element. The distinction of whether a wrapper element is added is mostly significant when nesting data. With JSON, the obvious choice of data structure to represent a table is an array.

As we've already seen above, a SQL row is represented in XML using an element. The question is only what the element name should be, for example the name of the table the row stems from. In JSON, unlike in XML, there is no such thing as an element name, so the row is "anonymous". The row type is defined by what table / array the JSON object is contained in.

Column values are XML elements or attributes, or JSON attributes

We have a few more choices of how to represent SQL column values in XML. Scalar values can easily be represented as attributes. If the value is not scalar (an array, user defined type, etc.), then elements are a better choice. In most cases, the choice is not relevant, so we can pick either. There are a few other reasonable default options for the representation of a column value in XML.
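The parallels between SQL result sets and XML/JSON structures can be sketched outside the database as well. The following is a minimal Python illustration, not how any of the mentioned databases implement their XML/JSON functions. The helper names `rows_to_xml` and `rows_to_json`, the `item` element name, and the sample rows are all hypothetical and chosen only for this example. It assumes a row is a dictionary, that scalar values become attributes, and that list values become nested elements.

```python
import json
import xml.etree.ElementTree as ET


def rows_to_xml(table, rows, wrapper=None):
    """Serialise rows as XML elements named after the table.

    Scalar column values become attributes; list values (standing in
    for nested, non-scalar data) become child elements. If `wrapper`
    is given, all row elements are placed inside one wrapper element.
    """
    elements = []
    for row in rows:
        elem = ET.Element(table)
        for column, value in row.items():
            if isinstance(value, list):
                # Non-scalar value: nested element with one child per item
                # ("item" is an arbitrary name chosen for this sketch).
                child = ET.SubElement(elem, column)
                for item in value:
                    ET.SubElement(child, "item").text = str(item)
            else:
                # Scalar value: an attribute is sufficient.
                elem.set(column, str(value))
        elements.append(elem)

    if wrapper is not None:
        root = ET.Element(wrapper)
        root.extend(elements)
        return ET.tostring(root, encoding="unicode")
    # Without a wrapper, the result is a sequence of sibling elements.
    return "".join(ET.tostring(e, encoding="unicode") for e in elements)


def rows_to_json(rows):
    """Serialise rows as a JSON array of anonymous objects."""
    return json.dumps(rows)


# Hypothetical sample data, made up for illustration only.
sample_rows = [
    {"first_name": "A", "last_name": "B", "titles": ["T1", "T2"]},
    {"first_name": "C", "last_name": "D", "titles": []},
]

if __name__ == "__main__":
    print(rows_to_xml("actor", sample_rows, wrapper="actors"))
    print(rows_to_json(sample_rows))
```

Note that the JSON version needs no table name at all: each row is an anonymous object, and its type is implied only by the array that contains it.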

